Deploy AI agents as production services

Run agents up to 100x cheaper.

Deploy any Python agent in one click with automatic scale-up and scale-down, built-in security, hosted memory, and zero vendor lock-in.

Keep complete ownership of your code: package agents with the open-source dank-py and deploy them with Dank Cloud.

Open Source & Ownership

Keep full ownership of your agent code

Dank Cloud is built on an open-source packaging engine and keeps your deployment model portable. Use our managed platform for speed, while preserving the flexibility to run your packaged agents anywhere.

Open-source runtime

Delta-Darkly/dank-py

Portable artifacts

Container-first packaging keeps deployment options open.

No lock-in

Your source code and runtime contract stay under your control.

Elastic by default

Scale up on demand and scale down when traffic cools.

Secure runtime

Isolated agents with encrypted secrets and endpoint auth.

Framework Agnostic

Build with any agent framework

Dank Cloud supports both framework-based and framework-free Python agents through one universal invocation contract, so you deploy and operate them the same way no matter how they are built.
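To make the idea of a single invocation contract concrete, here is a hypothetical sketch (not dank-py's actual interface) of an adapter that wraps three common agent styles behind one handler: a plain function, an async function, and an object with a `run()` method. The handler accepts the `{"prompt": ...}` body used by the `POST /prompt` endpoint; the name `make_handler` and the `"response"` output key are assumptions for illustration.

```python
import asyncio
import inspect
from typing import Any, Callable, Dict


def make_handler(agent: Any) -> Callable[[Dict[str, Any]], Dict[str, Any]]:
    """Hypothetical adapter: expose any agent through one request/response shape.

    Accepts an object with a run(prompt) method, a plain function, or an
    async function, and returns a handler for the {"prompt": ...} body.
    dank-py's real contract may differ.
    """
    if hasattr(agent, "run"):
        call = agent.run
    elif callable(agent):
        call = agent
    else:
        raise TypeError("agent must be callable or expose a run() method")

    def handler(body: Dict[str, Any]) -> Dict[str, Any]:
        prompt = body.get("prompt")
        if not isinstance(prompt, str) or not prompt:
            raise ValueError('body must contain a non-empty "prompt" string')
        result = call(prompt)
        # Async agents return a coroutine; run it to completion here.
        # (Inside an already-running event loop you would await it instead.)
        if inspect.iscoroutine(result):
            result = asyncio.run(result)
        # "response" is an assumed output key, not a documented field.
        return {"response": str(result)}

    return handler
```

The point of the design is that the platform only ever sees `handler(body)`, whatever framework produced the agent.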

Supported: AutoGen, PydanticAI, Haystack, LlamaIndex, CrewAI, DSPy, Mastra

Universal Python Runtime

Available now

Deploy LangChain, LangGraph, CrewAI, and custom Python agents through one standardized deployment and invocation path.

Open Source, No Lock-In

Built on dank-py

Own your code and packaging workflow through the same standardized runtime contract, with fully portable deployment artifacts.


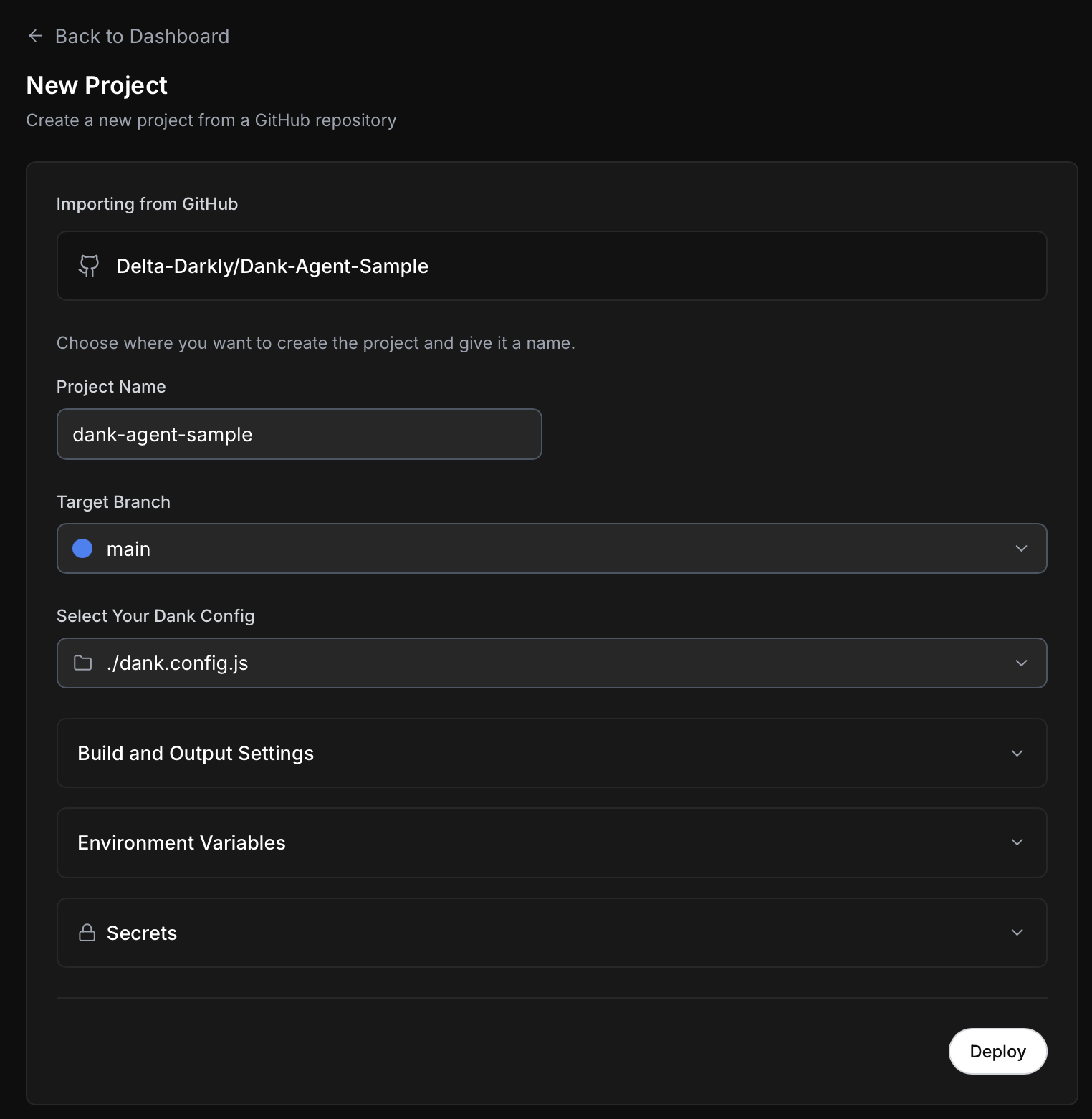

Deploy in 3 steps

From GitHub repo to production endpoint in under 5 minutes

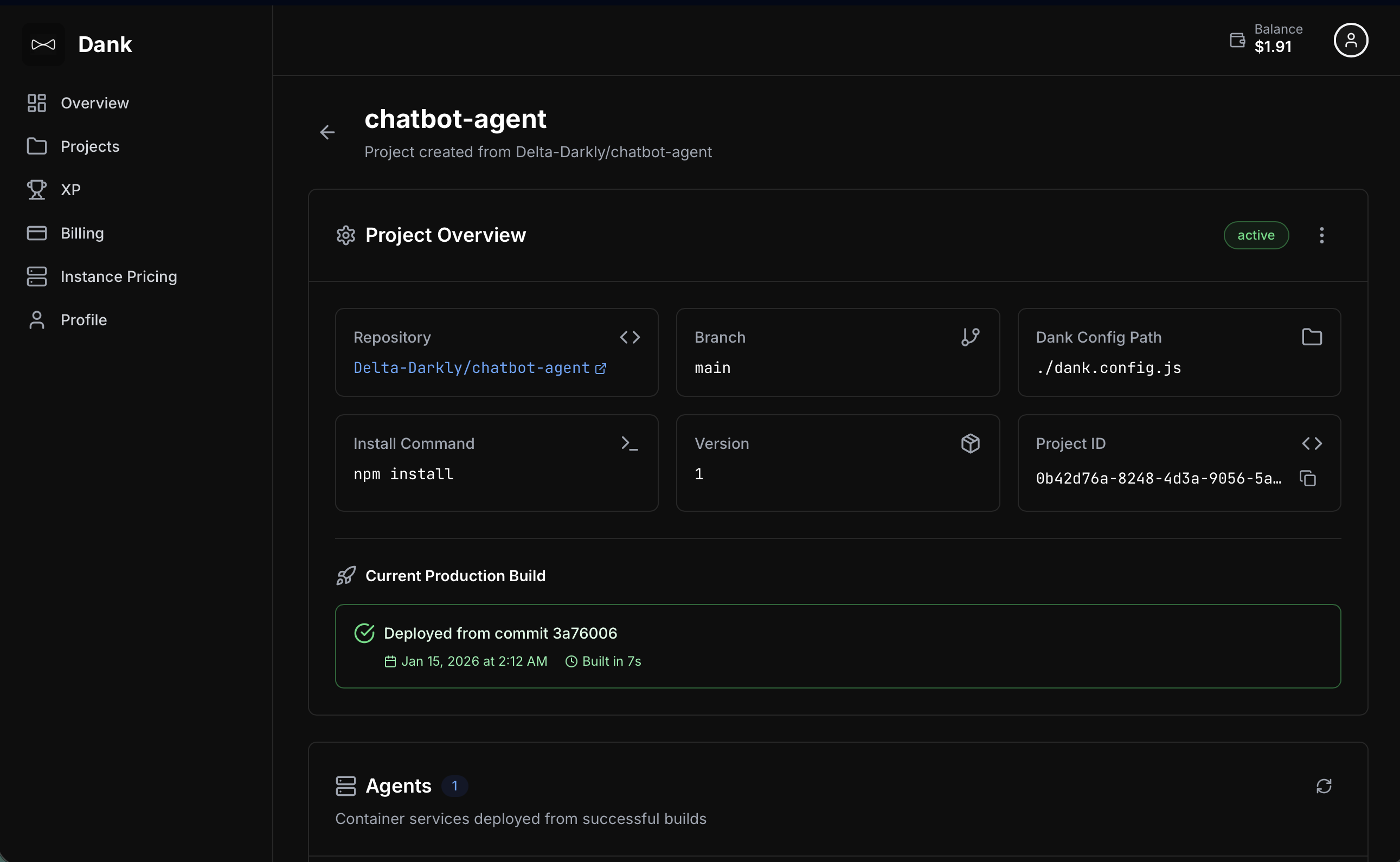

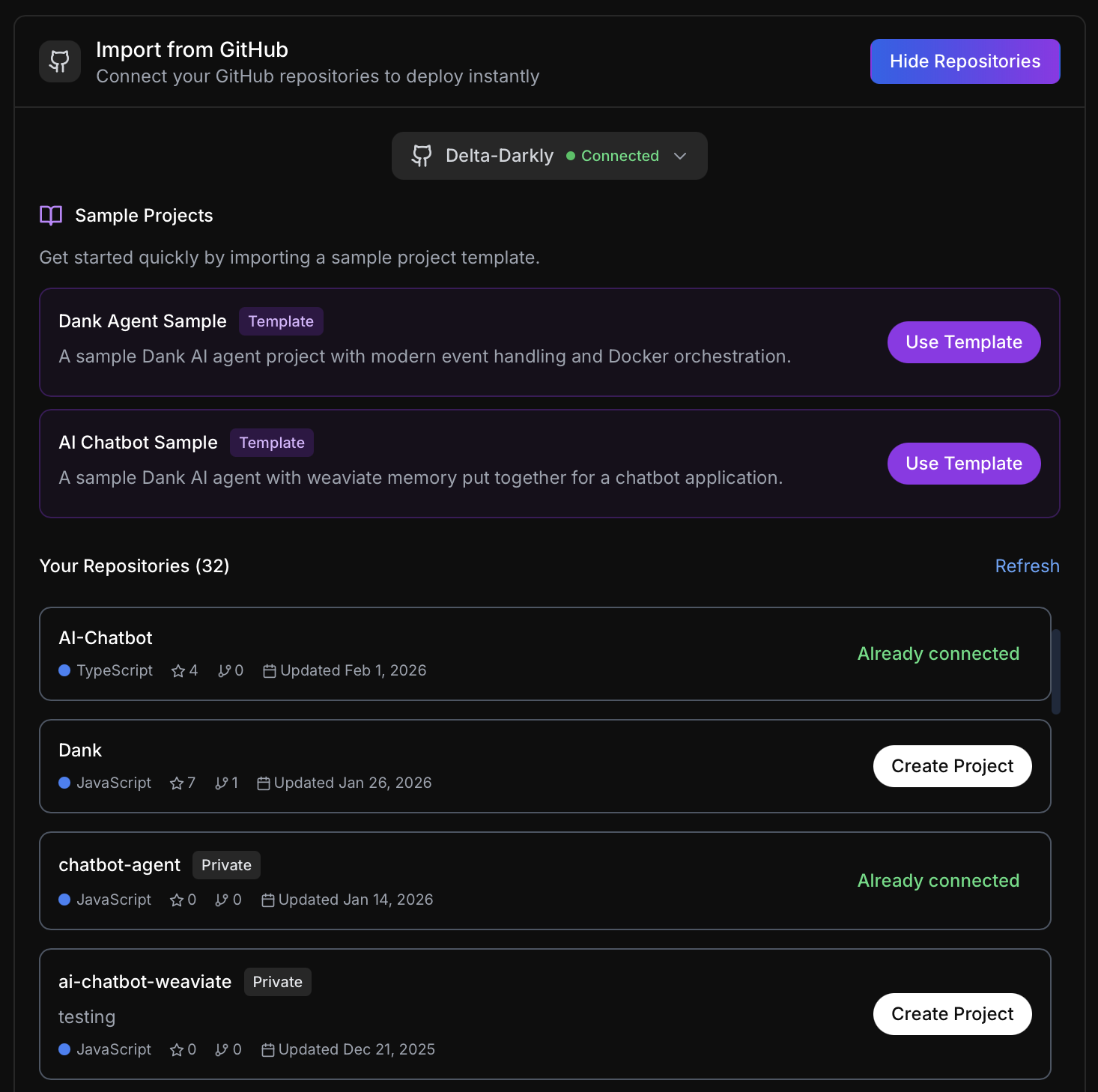

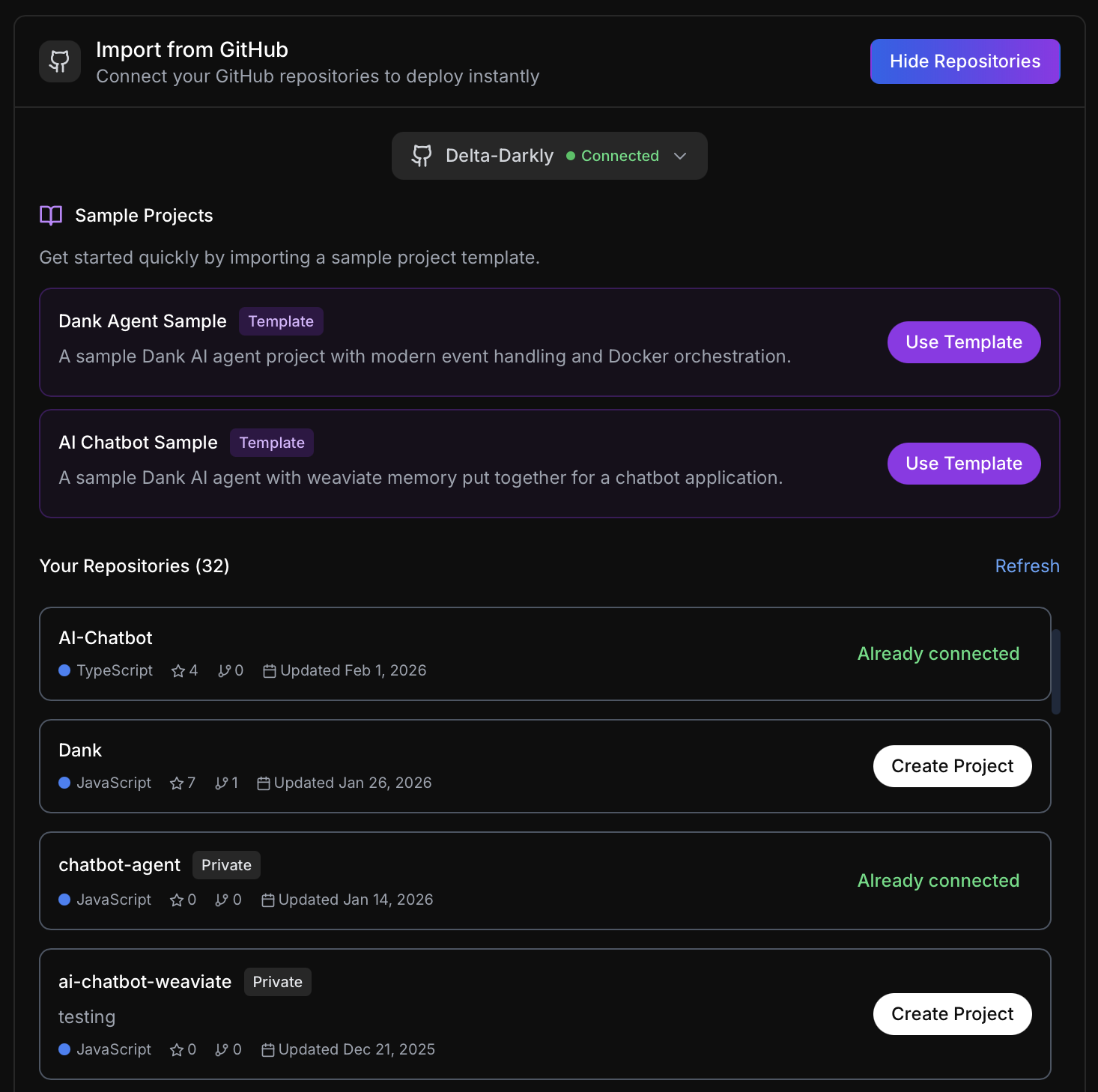

Connect GitHub

Connect your GitHub repo in one click. Dank Cloud detects your agents and their configuration automatically.

Configure

Select which agents to deploy. Set environment variables, secrets, and resource allocation. Everything is configurable from the dashboard.

Deploy

Click deploy. Each agent launches as its own secure, API-addressable service in seconds.

Platform Features

Everything you need to ship agents

We handle the tedious work of running scalable infrastructure (deployment, routing, auth, logs, and memory) so you can focus on building great agents.

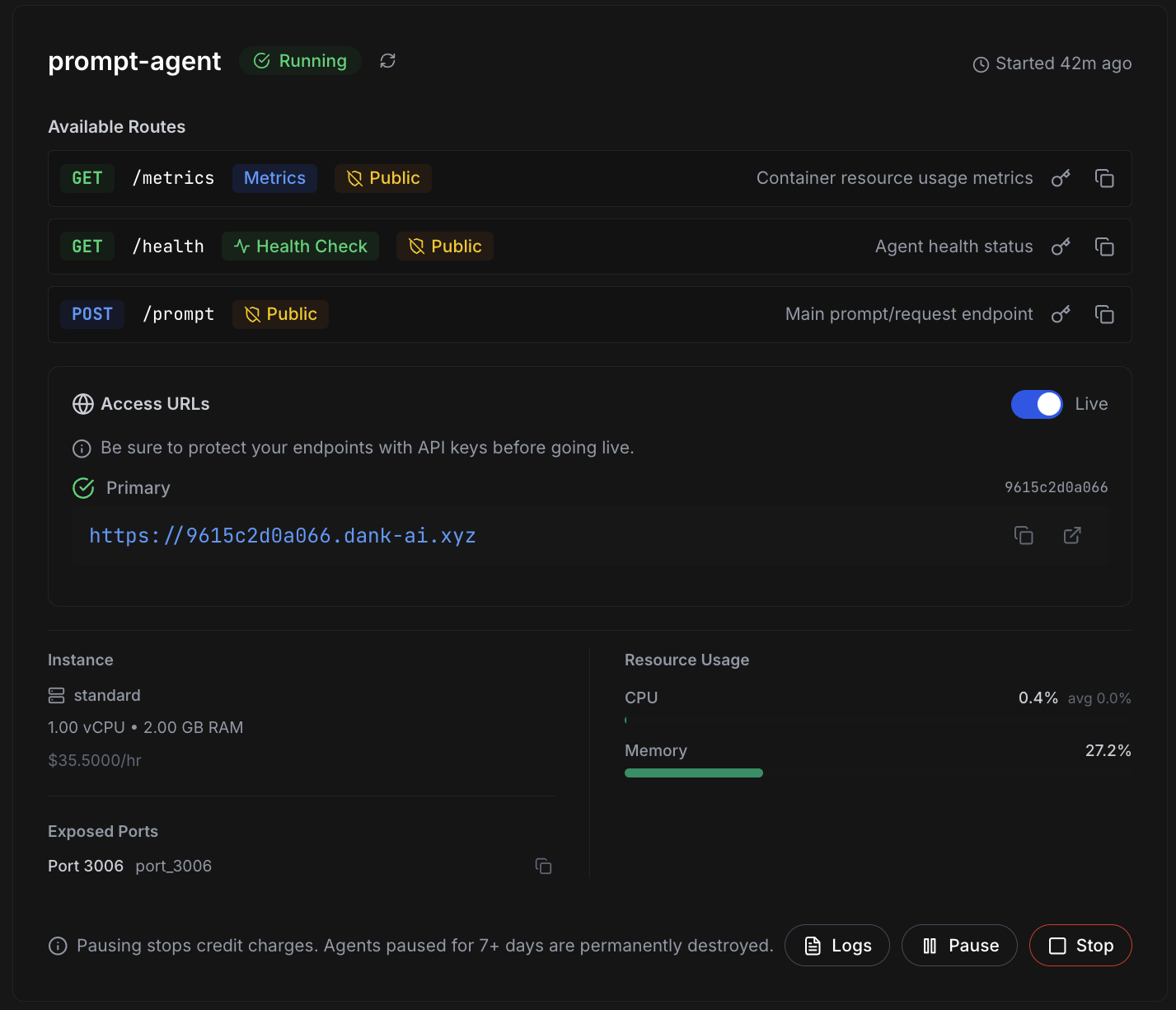

Agent Management

Full control over every agent

Manage every aspect of your deployed agents through an intuitive dashboard. Monitor performance, configure resources, and optimize utilization in real time.

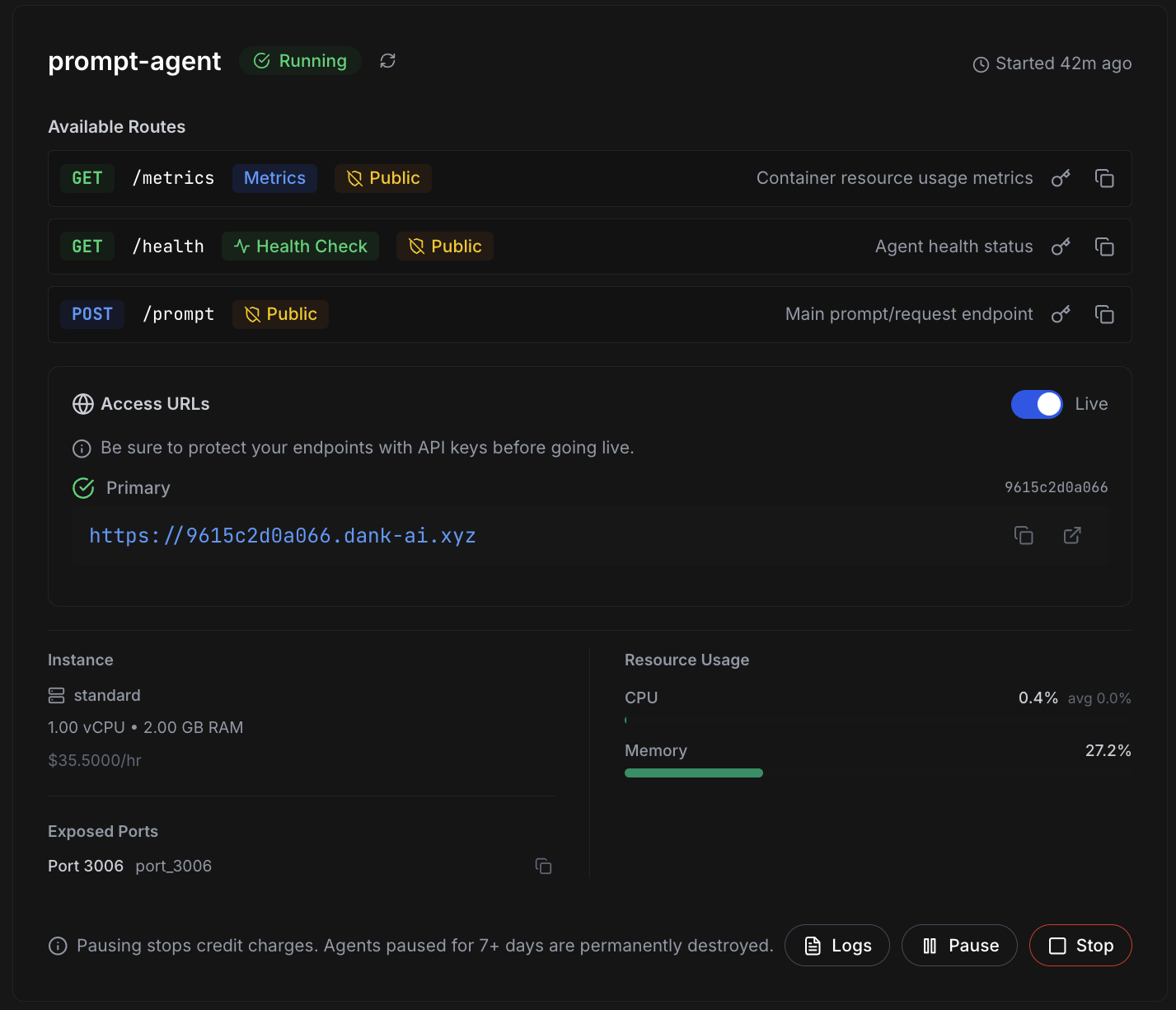

Resource allocation

Set CPU, memory, and instance size per agent. Scale resources based on workload requirements.

Real-time monitoring

Track agent status, uptime, and health metrics. Know exactly when something needs attention.

One-click actions

Start, stop, restart, or redeploy agents instantly. Full lifecycle management from the dashboard.

Available Endpoints

GET /health

GET /metrics

POST /prompt

Example Request

    curl -X POST "https://<agent-id>.ai-dank.xyz/prompt" \
      -H "Content-Type: application/json" \
      -H "Authorization: Bearer <YOUR_API_KEY>" \
      -d '{"prompt": "Explain how transformers work."}'

Dedicated Endpoints

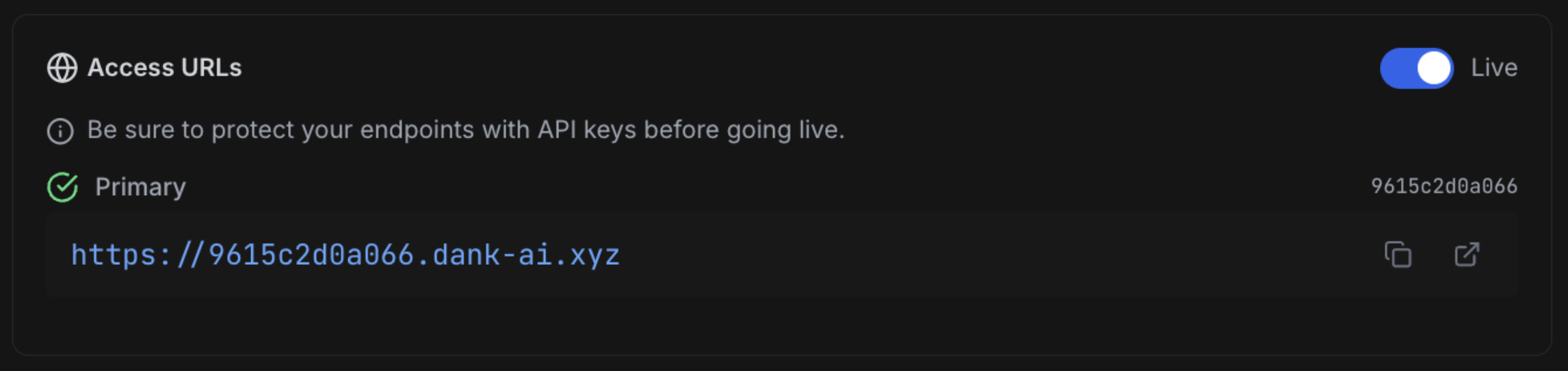

Each agent gets its own domain

Deploy and go straight to production with stable, agent-specific endpoints. Each agent is instantly accessible via its own dedicated HTTPS URL.

Instant production URLs

Every agent gets a unique subdomain. No DNS configuration required.

SSL/TLS by default

All endpoints are secured with HTTPS automatically. No certificate management needed.

Direct HTTP access

Call agents directly via REST API. No routing configuration or gateway setup.
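Because each agent is a plain HTTPS endpoint, any HTTP client can call it. Below is a minimal standard-library sketch that assumes the URL pattern, headers, and `{"prompt": ...}` body from the curl example on this page. The helper names are hypothetical, and the response schema is not documented here, so `send` returns the raw body text.

```python
import json
import urllib.request

# URL pattern from the curl example; "{agent_id}" is filled in per agent.
BASE_URL = "https://{agent_id}.ai-dank.xyz"


def build_prompt_request(agent_id: str, api_key: str, prompt: str) -> urllib.request.Request:
    """Build (without sending) the POST /prompt request from the curl example."""
    body = json.dumps({"prompt": prompt}).encode("utf-8")
    return urllib.request.Request(
        BASE_URL.format(agent_id=agent_id) + "/prompt",
        data=body,
        method="POST",
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
    )


def build_health_request(agent_id: str) -> urllib.request.Request:
    """Build a GET /health request (whether it needs auth is not stated; none is sent here)."""
    return urllib.request.Request(BASE_URL.format(agent_id=agent_id) + "/health", method="GET")


def send(request: urllib.request.Request, timeout: float = 120.0) -> str:
    """Send the request and return the response body as text.

    urllib raises urllib.error.HTTPError on non-2xx responses,
    for example when the API key is rejected.
    """
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.read().decode("utf-8")
```

For example, `send(build_prompt_request("my-agent", api_key, "Explain how transformers work."))` would issue the same call as the curl command, where `my-agent` is a placeholder agent ID. The generous default timeout reflects that requests can run past 30 seconds.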

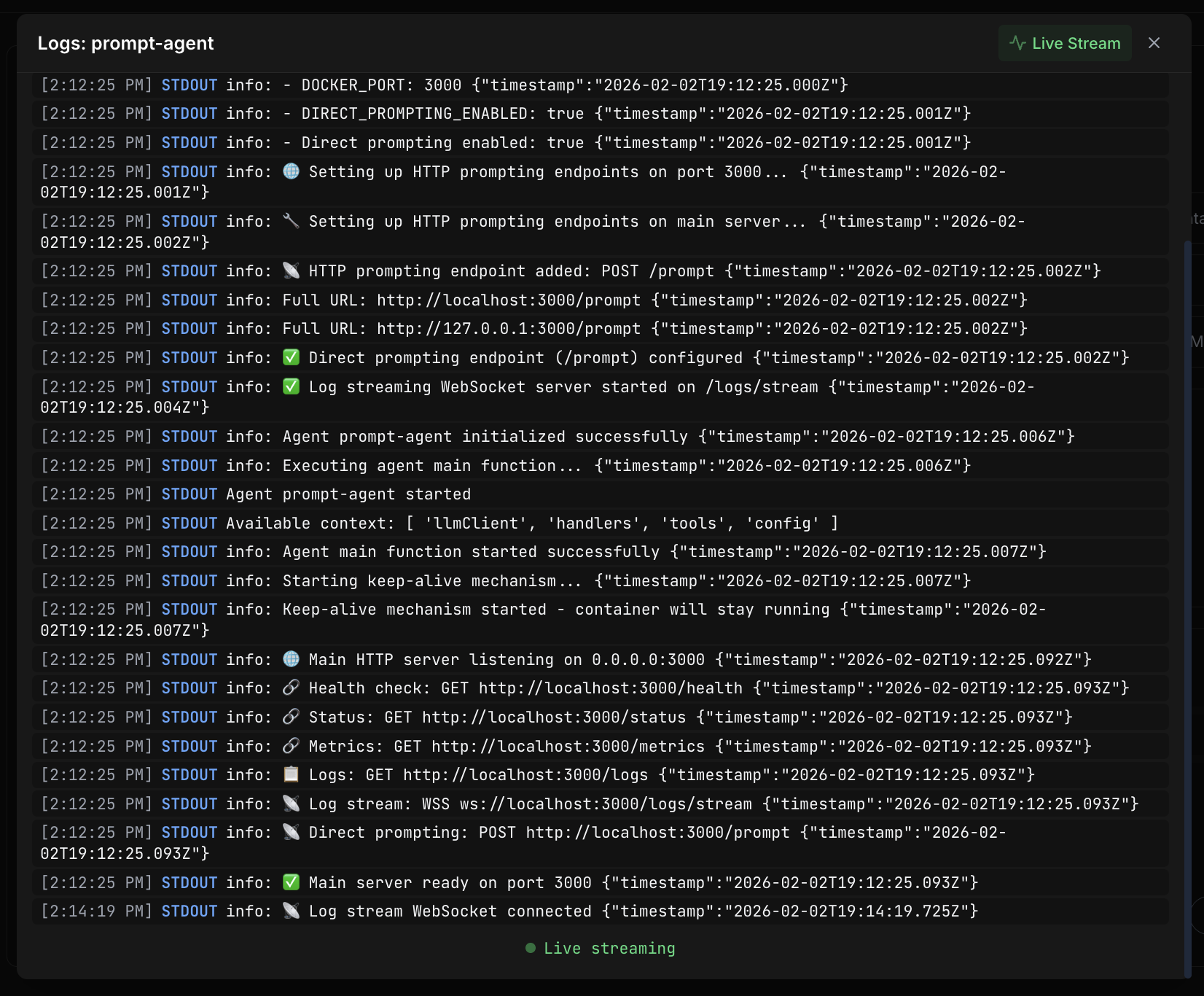

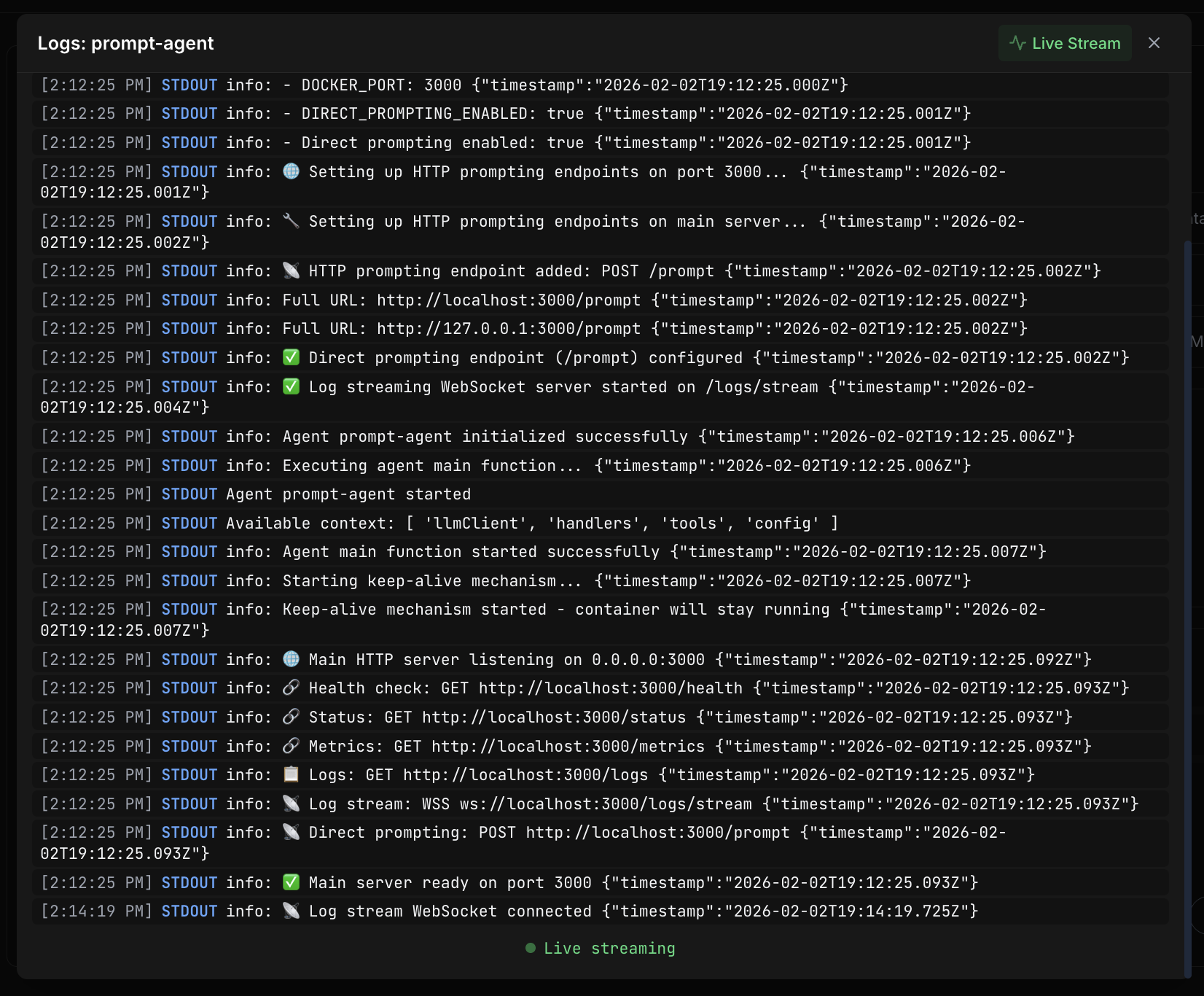

Observability

Per-agent logs and visibility

Clear, isolated logs for each agent. See exactly what each agent is doing, when it fails, and why. No more digging through mixed backend logs.

Isolated log streams

Each agent has its own dedicated log stream that you can filter and search.

Real-time streaming

Watch logs as they happen. Debug issues immediately during development.

Tracing

End-to-end request tracing across agent calls in multi-agent workflows.
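Tracing a request across several agents generally depends on passing a trace context from each call to the next. The page does not say which propagation format Dank Cloud uses; the sketch below assumes the W3C Trace Context `traceparent` header (`version-traceid-parentid-flags`) purely as an illustration. A downstream call keeps the caller's trace-id and flags and gets a fresh span-id, so every hop lands in the same trace.

```python
import re
import secrets
from typing import Optional

# version "00" - 32-hex trace-id - 16-hex parent/span-id - 2-hex trace-flags
TRACEPARENT_RE = re.compile(r"^00-([0-9a-f]{32})-([0-9a-f]{16})-([0-9a-f]{2})$")


def new_traceparent(sampled: bool = True) -> str:
    """Start a new trace with a random trace-id and span-id."""
    flags = "01" if sampled else "00"
    return f"00-{secrets.token_hex(16)}-{secrets.token_hex(8)}-{flags}"


def child_traceparent(incoming: Optional[str]) -> str:
    """Header value for a downstream agent call made while handling a request.

    Keeps the caller's trace-id and flags so every hop shares one trace,
    and generates a fresh span-id for this hop. Starts a new trace if the
    incoming header is missing or invalid.
    """
    if incoming:
        match = TRACEPARENT_RE.match(incoming.strip())
        if match:
            trace_id, parent_id, flags = match.groups()
            # All-zero ids are invalid per the W3C spec.
            if trace_id != "0" * 32 and parent_id != "0" * 16:
                return f"00-{trace_id}-{secrets.token_hex(8)}-{flags}"
    return new_traceparent()
```

An agent calling another agent would attach `child_traceparent(incoming_headers.get("traceparent"))` as the `traceparent` header of its outgoing request, where `incoming_headers` is a hypothetical name for the headers it received.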

Integrations

Powerful integrations, zero setup

Connect your tools and services seamlessly. We've built deep integrations so you don't have to.

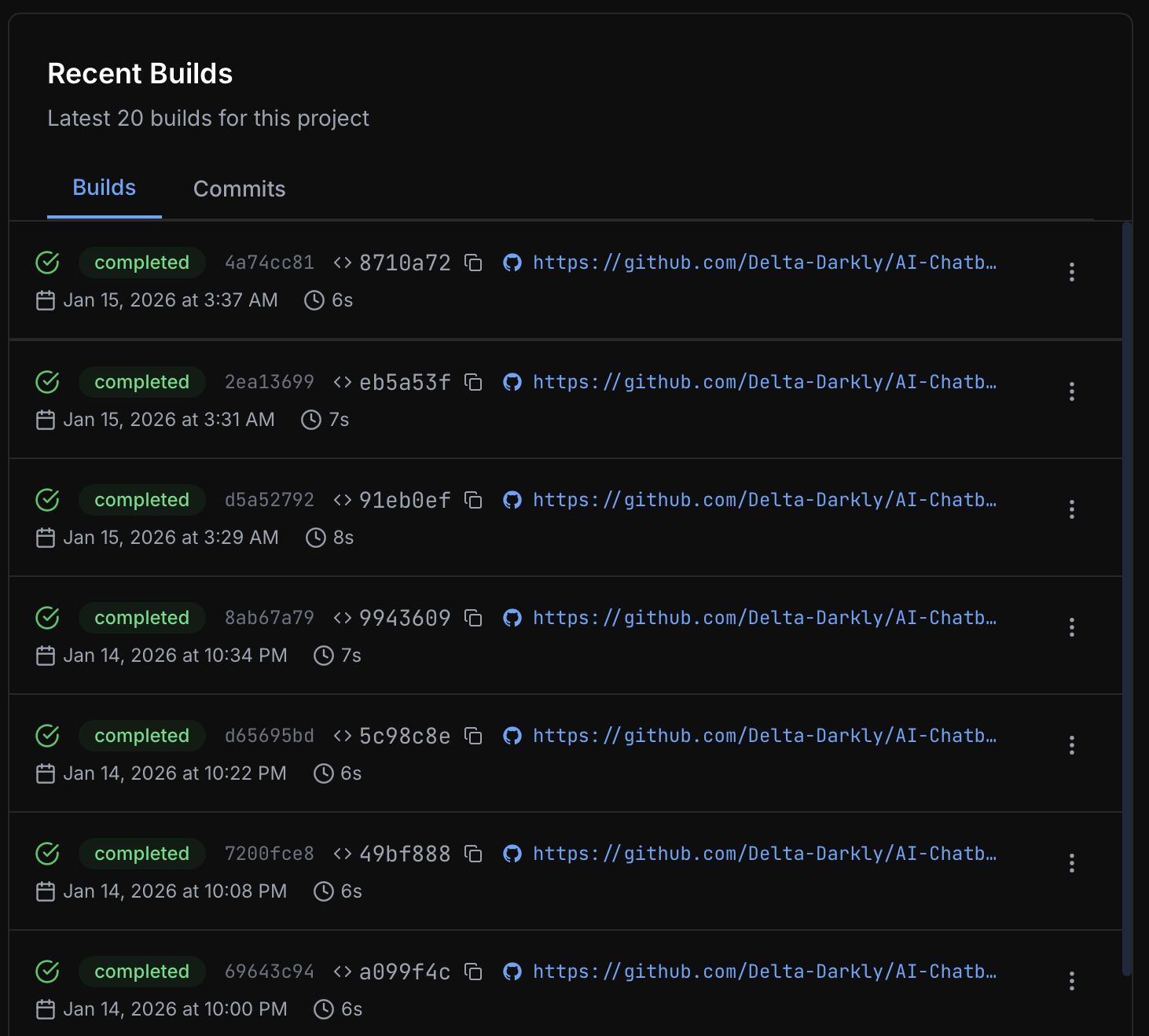

GitHub Integration

Push to deploy, automatically

Connect your GitHub repository and get full CI/CD out of the box. Every push to your branch triggers an automatic rebuild and redeployment.

Auto-redeployments

Push to your branch and your agents redeploy automatically. No manual deployment steps.

Fast builds

Optimized Docker builds complete in seconds. Get from code to production faster.

Build logs

Real-time build output and deployment history. Debug build failures instantly.
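A push-to-deploy pipeline typically receives GitHub `push` webhooks and decides whether each delivery should trigger a build. As a hedged sketch of that decision (not Dank Cloud's implementation), the code below first checks the `X-Hub-Signature-256` HMAC that GitHub attaches to webhook deliveries, then accepts only pushes to the deployed branch that do not delete it. `verify_signature`, `should_redeploy`, and `deploy_branch` are illustrative names.

```python
import hashlib
import hmac
from typing import Any, Dict


def verify_signature(secret: bytes, body: bytes, signature_header: str) -> bool:
    """Check GitHub's X-Hub-Signature-256 header ("sha256=<hex HMAC of the raw body>")."""
    expected = "sha256=" + hmac.new(secret, body, hashlib.sha256).hexdigest()
    # Constant-time comparison avoids leaking how many characters matched.
    return hmac.compare_digest(expected, signature_header or "")


def should_redeploy(event: str, payload: Dict[str, Any], deploy_branch: str) -> bool:
    """Decide whether a verified webhook delivery should trigger a rebuild.

    event: value of the X-GitHub-Event header.
    payload: parsed JSON body of the delivery.
    """
    if event != "push":
        return False
    # Branch pushes carry refs like "refs/heads/main"; tag pushes use "refs/tags/...".
    if payload.get("ref") != f"refs/heads/{deploy_branch}":
        return False
    # A push that deletes the branch leaves nothing to build.
    return not payload.get("deleted", False)
```

Verifying the signature before parsing the payload means an unauthenticated request can never trigger a build.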

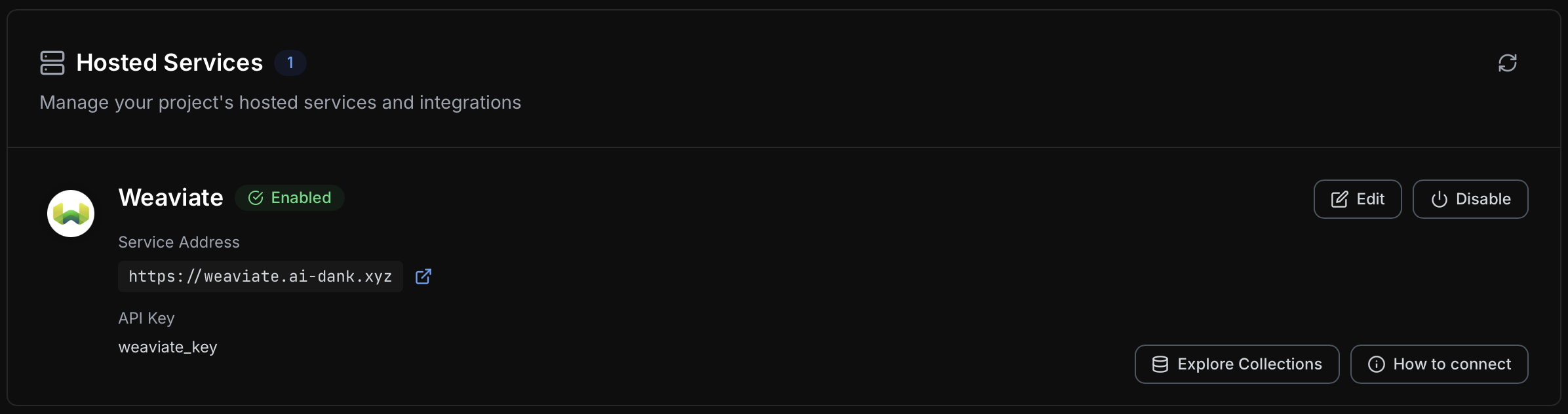

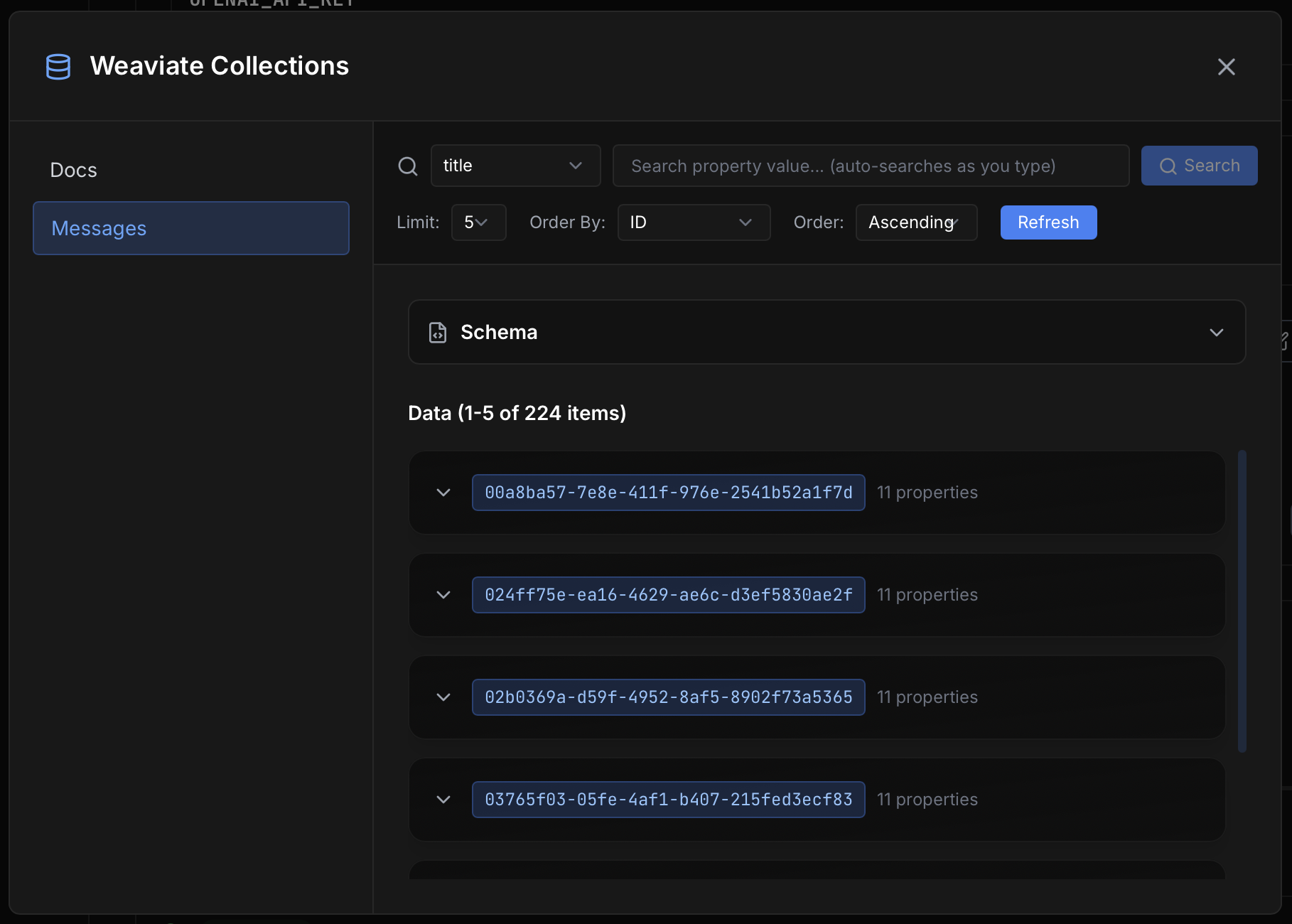

Vector Memory

Built-in memory for your agents

Every agent comes with a production-ready vector store out of the box. Store and retrieve memory, embeddings, and context without provisioning or operating a database.

Vector storage

Store embeddings for RAG, semantic search, and long-term agent memory.

Fast similarity search

Query vectors with low latency. Weaviate handles indexing automatically.

No setup required

Pre-configured and ready to use. Just connect from your agent code.
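Similarity search ranks stored vectors by how close they are to a query vector, commonly by cosine similarity. The brute-force sketch below only illustrates what such a query computes. It is not Weaviate's API or Dank Cloud's interface, and production vector databases such as Weaviate use approximate nearest-neighbor indexes rather than scanning every vector.

```python
import math
from typing import Dict, List, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors (1.0 = same direction)."""
    if len(a) != len(b):
        raise ValueError("vectors must have the same dimension")
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # a zero vector has no direction; treat it as unrelated
    return dot / (norm_a * norm_b)


def top_k(query: Sequence[float], memory: Dict[str, Sequence[float]], k: int = 3) -> List[Tuple[str, float]]:
    """Return the k stored entries most similar to the query, best first."""
    scored = [(key, cosine_similarity(query, vec)) for key, vec in memory.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:k]
```

With toy two-dimensional vectors, `top_k([1.0, 0.0], {"cats": [1.0, 0.0], "dogs": [0.9, 0.1], "stocks": [0.0, 1.0]}, k=2)` ranks `"cats"` first and `"dogs"` second. Real embeddings have hundreds of dimensions but are ranked the same way.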

MCP Deployment

Offload tools and heavy compute to MCP services

Keep agent containers lean by deploying shared tools and high-compute integrations as dedicated MCP services. This separation improves isolation, scalability, and cost efficiency under real traffic.

Dedicated tool plane

Run shared tools once and let multiple agents invoke them safely.

Lighter agent runtimes

Keep core agents focused on reasoning while MCP services handle heavy lifting.

Composable architecture

Build distributed AI systems with clear service boundaries and cleaner observability.
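MCP services speak JSON-RPC 2.0, and an agent discovers and invokes tools with the `tools/list` and `tools/call` methods defined by the Model Context Protocol. The sketch below only builds those request messages. The transport (stdio or HTTP), the initialization handshake, and how Dank Cloud exposes MCP services are not covered here, and the tool name `search_documents` is hypothetical.

```python
import itertools
import json
from typing import Any, Dict

# Monotonic JSON-RPC request ids; the server echoes each id in its response.
_request_ids = itertools.count(1)


def _jsonrpc_request(method: str, params: Dict[str, Any]) -> str:
    """Serialize a JSON-RPC 2.0 request message."""
    return json.dumps({
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": method,
        "params": params,
    })


def list_tools_request() -> str:
    """Ask an MCP server which tools it exposes."""
    return _jsonrpc_request("tools/list", {})


def call_tool_request(tool_name: str, arguments: Dict[str, Any]) -> str:
    """Invoke one tool by name with JSON arguments."""
    return _jsonrpc_request("tools/call", {"name": tool_name, "arguments": arguments})
```

Because the agent only exchanges small JSON messages, the heavy work stays inside the MCP service, which can be scaled separately from the agents that call it.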

Security

Built-in authentication & secrets

Secure your agents with API keys and encrypted secrets. No custom auth or credential plumbing required.

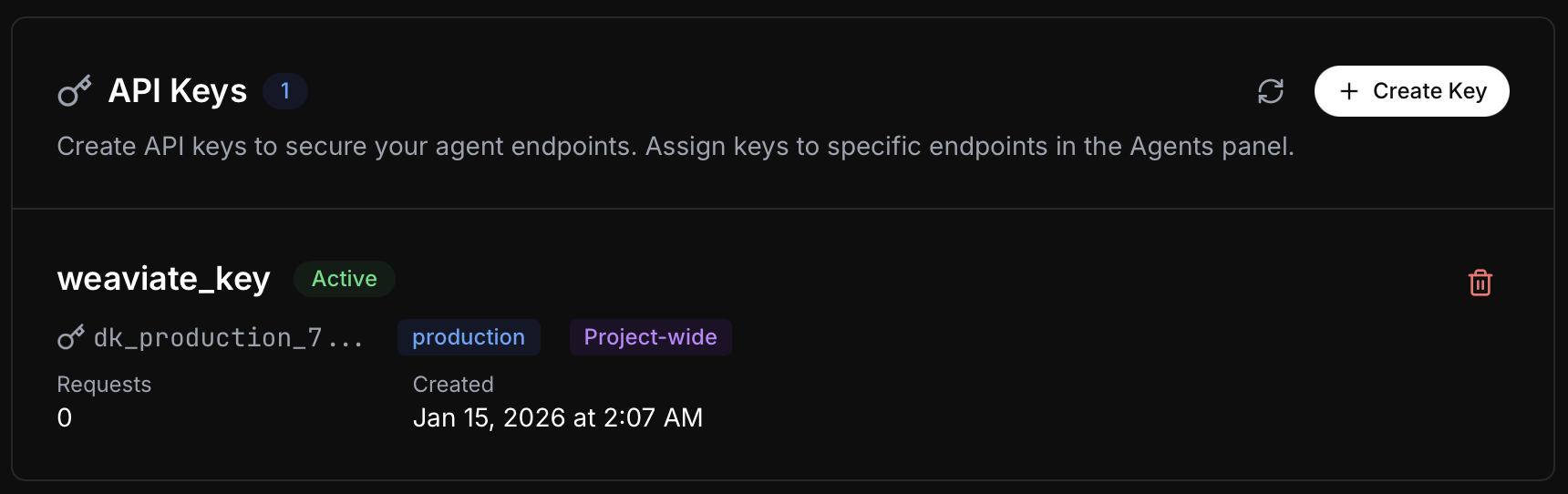

API key management

Generate and manage API keys per agent. Control who can access each endpoint.

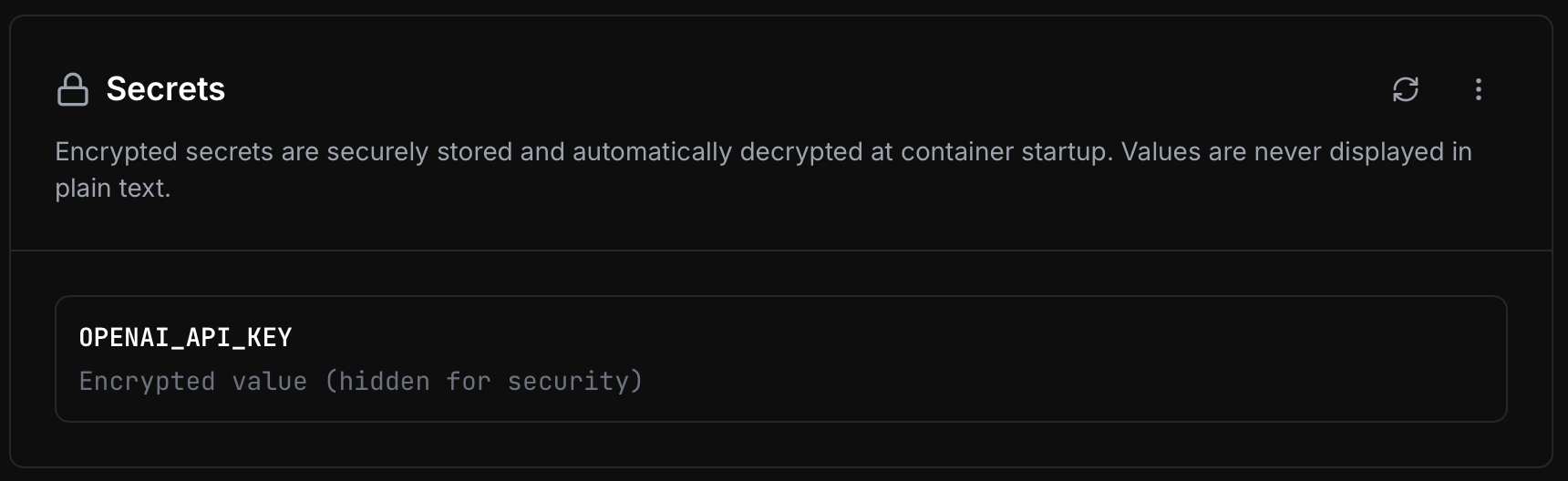

Secrets management

Store API keys, tokens, and credentials securely. Secrets are encrypted at rest and injected into agents at runtime.

Environment variables

Set environment variables in the dashboard. Changes apply on the next deploy, with no code changes required.
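Assuming secrets and environment variables set in the dashboard reach the agent process as ordinary environment variables (the page says only that secrets are "injected into agents at runtime"), agent code can read them at startup and fail fast when one is missing. The helper below is a hypothetical sketch; `OPENAI_API_KEY` is just an example name.

```python
import os
from typing import Mapping, Optional


class MissingSettingError(RuntimeError):
    """Raised when a required secret or environment variable is absent."""


def require_env(name: str, env: Optional[Mapping[str, str]] = None) -> str:
    """Return a required setting, failing fast with a clear message.

    Reads os.environ by default; pass a mapping to test without touching it.
    """
    source = os.environ if env is None else env
    value = source.get(name)
    if not value:
        # Report only the name, never the value, so secrets stay out of logs.
        raise MissingSettingError(f"{name} is not set; add it in the dashboard and redeploy")
    return value
```

Calling `require_env("OPENAI_API_KEY")` at module import time surfaces a missing secret in the agent's logs immediately rather than on the first request.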

Architecture

Each agent runs independently

Most platforms run all agents in a shared runtime. Dank deploys each agent as its own service, so failures are isolated, scaling is elastic, and logs are clear.

Shared Fate

All agents share one runtime, so one crash takes them all down. Limited visibility makes debugging harder.

Scaling Requires Restart

When one agent needs more resources, you must shut down everything and redeploy.

No Way to Scale Down

Once upgraded, you're stuck paying for oversized specs even when traffic cools.

Isolated Failures

Each agent runs in its own container. If one crashes, the others keep running, and the failed agent restarts automatically in the background.

Elastic Scaling

Each agent automatically scales independently based on load. Scale up when hot, scale down when idle. Pay only for what you use.

Built-in Routing & Tracing

Requests are automatically routed across available agent instances to distribute load. Trace every step of multi-agent requests end to end.
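Independent elastic scaling means a separate scaling decision for each agent. The page does not describe Dank Cloud's policy; the sketch below assumes a concurrency target, similar in spirit to Knative's autoscaler. It runs enough instances that each one handles at most a target number of in-flight requests, clamped between a floor and a ceiling. A floor of zero is what allows scale-to-zero, and therefore cold starts. All names and defaults are hypothetical.

```python
import math


def desired_instances(in_flight: int, target_per_instance: int,
                      min_instances: int = 0, max_instances: int = 10) -> int:
    """Concurrency-based scaling decision for a single agent.

    in_flight: concurrent requests currently hitting this agent.
    target_per_instance: concurrent requests one instance should handle.
    min_instances=0 allows scale-to-zero when idle (at the cost of cold starts).
    """
    if target_per_instance <= 0:
        raise ValueError("target_per_instance must be positive")
    if not 0 <= min_instances <= max_instances:
        raise ValueError("require 0 <= min_instances <= max_instances")
    needed = math.ceil(max(in_flight, 0) / target_per_instance)
    # Clamp so one agent can neither drop below its floor nor grow without bound.
    return max(min_instances, min(max_instances, needed))
```

With a target of 10, an idle agent goes to 0 instances, 25 concurrent requests need 3, and a spike of 500 is capped at 10. Real autoscalers also smooth the input over a time window so the instance count does not flap.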

Pricing

Simple tiered pricing with usage-based overage

Each call to an agent counts as one request. Any time a request runs beyond 30 seconds is metered as overtime seconds. The Free tier includes cold starts; Plus and Pro eliminate them.
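Under these rules, usage reduces to two numbers: a request count and the total time that requests spend beyond 30 seconds. A minimal sketch, assuming the 30-second allowance applies to each request individually and that overtime is not rounded. Per-unit overage prices are not listed here, so the sketch stops at the metered quantities; `meter` is a hypothetical name.

```python
from typing import Iterable, Tuple

# Per-request time included before overtime is metered (from the pricing text).
INCLUDED_SECONDS = 30.0


def meter(durations_s: Iterable[float]) -> Tuple[int, float]:
    """Return (request_count, overtime_seconds) for a batch of request durations."""
    requests = 0
    overtime = 0.0
    for duration in durations_s:
        if duration < 0:
            raise ValueError("duration cannot be negative")
        requests += 1
        # Only the portion of each request beyond the allowance is metered.
        overtime += max(0.0, duration - INCLUDED_SECONDS)
    return requests, overtime
```

For requests lasting 12, 45, 30, and 90.5 seconds, `meter` reports 4 requests and 75.5 overtime seconds (15 from the second request and 60.5 from the fourth).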

| Tier | Best for | Price |
| --- | --- | --- |
| Free | Great for experimentation | $0 per month |
| Plus | Production-ready usage | $25 per month |
| Pro | High-throughput deployments | $99 per month |

Pay-as-you-go overage pricing

Ready to deploy?

Deploy stateless agent microservices with the economics, security, and flexibility required for production.